Archive for the ‘diStorm’ Category

Short Update

Sunday, December 27th, 2009

I’ve slowed down with posting here; I’ve been working hard recently, both on diStorm and at my real-life job. Hopefully I will be able to release diStorm3 soon (a matter of weeks, for a start). I’m almost done coding everything I wanted, though I’m still left with the features you guys asked for, and tons of tests, since I also changed lots of code and added the new AVX instruction set.

It’s Vexed :)

Tuesday, November 17th, 2009

In the last few weeks I’ve been working on diStorm to add the new instruction sets: AVX and FMA. You can find lots of information about them on the net. In brief, the big advantages are support for 256-bit registers, now called YMMs (their low halves are the XMMs), and built-in support for AES: you get a few instructions for encrypting and decrypting a single small block, really sweet. I guess it will help some security companies out there speed things up. The main feature behind these instruction sets, though, is the three-operand form. You are no longer stuck with two registers per instruction; sometimes you can even have four. This is good because you get two source operands and a separate destination operand, which saves you extra instructions (to move or back up registers), and you don’t have to destroy your combined dest-src operand like in the old sets. Almost forgot to mention FMA itself: fused multiply-add instructions, which do two operations at once, like A*B+C.

I wanted to talk about the VEX prefix itself. It’s really a new design and approach to prefixes that was never seen before. And the title of this post says it all, it’s really annoying.

The VEX (Vector Extension) prefix is, for a change, a multi-byte prefix: it can be either 2 or 3 bytes long. If you take a look at the one-byte opcode map, all of the entries are taken. Intel needed a new unused byte, which didn’t really exist. What they did instead was to share two existing opcodes, one for each prefix form. The sharing works in a special way that lets the processor know whether you meant the original instruction or the new prefix. The chosen instructions are LES (0xc4) and LDS (0xc5). When you examine the second byte of these instructions (the ModR/M byte, from which the operands are extracted), you can learn that its two most significant bits can’t be set together (i.e., a value of 0xc0 or higher), because that would mean a register operand, while these instructions load a far pointer from memory. If they are set anyway, the processor raises an invalid-opcode exception. This is where the VEX prefix enters the game: instead of raising an exception, such bytes are decoded as the special multi-byte prefix.

Note that in 64-bit mode all those load-segment instructions are invalid, so there is no need to share the opcodes: when you encounter 0xc4 or 0xc5, you know it’s a VEX prefix, as simple as that. Unfortunately this is not the case in 32-bit mode. Since the second byte has to be 0xc0 or higher there (the two most significant bits must be set), the fields in those bits are stored inverted, which means you have to extract a few bits that represent some fields and bitwise-NOT them. This is seriously gross, but it seems Intel didn’t have much of a choice here. If it were up to me, I would eventually do the same, for the sake of backward compatibility, but that doesn’t make it any prettier, to be honest. And for your information, AMD pulled the same trick with the POP instruction (0x8f) for their new instruction sets (XOP, etc.), without full backward compatibility.

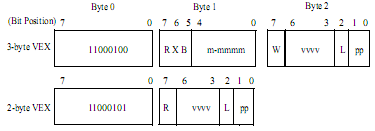

To make some order in the bits let’s have a look at the following figure:

Cited Intel
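A minimal C sketch of the detection rule and of un-inverting the fields follows. It assumes the layout in Intel's figure: in the 2-byte form (0xc5) the next byte holds ~R, ~vvvv, L and pp; in the 3-byte form (0xc4) the next byte holds ~R, ~X, ~B and the map field mmmmm, and the byte after it holds W, ~vvvv, L and pp. The names `vex_info` and `decode_vex` are made up for this illustration and are not diStorm's API.

```c
#include <stddef.h>
#include <stdint.h>

/* VEX fields with the inverted bits already flipped back (hypothetical type). */
typedef struct {
    int size;    /* prefix length: 2 or 3 */
    int r, x, b; /* REX-like register extension bits */
    int w;       /* operand-size / opcode extension bit */
    int vvvv;    /* extra source register index */
    int l;       /* vector length: 0 = 128-bit (XMM), 1 = 256-bit (YMM) */
    int pp;      /* selects the implied legacy prefix */
    int mmmmm;   /* selects the implied escape bytes */
} vex_info;

/* Returns 1 and fills *v if buf starts with a VEX prefix, 0 otherwise.
   Outside 64-bit mode, 0xc4/0xc5 followed by a byte below 0xc0 is a
   plain LES/LDS with a memory operand, not VEX. */
int decode_vex(const uint8_t *buf, size_t len, int is64, vex_info *v)
{
    if (len < 2 || (buf[0] != 0xc4 && buf[0] != 0xc5))
        return 0;
    if (!is64 && buf[1] < 0xc0)
        return 0;

    if (buf[0] == 0xc5) {                 /* 2-byte form */
        v->size = 2;
        v->r = !(buf[1] & 0x80);          /* stored inverted */
        v->x = v->b = v->w = 0;
        v->vvvv = (~buf[1] & 0x78) >> 3;  /* stored inverted */
        v->l = (buf[1] >> 2) & 1;
        v->pp = buf[1] & 3;
        v->mmmmm = 1;                     /* the 0x0f escape is implied */
        return 1;
    }

    if (len < 3)                          /* 3-byte form needs one more byte */
        return 0;
    v->size = 3;
    v->r = !(buf[1] & 0x80);              /* R, X, B are stored inverted */
    v->x = !(buf[1] & 0x40);
    v->b = !(buf[1] & 0x20);
    v->mmmmm = buf[1] & 0x1f;
    v->w = (buf[2] >> 7) & 1;
    v->vvvv = (~buf[2] & 0x78) >> 3;      /* stored inverted */
    v->l = (buf[2] >> 2) & 1;
    v->pp = buf[2] & 3;
    return 1;
}
```

With this sketch, `c5 06` in 32-bit mode is left alone as LDS EAX, [ESI], while in 64-bit mode the same two bytes are taken as a VEX prefix.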

Well, I am not going to talk about all the fields (some of which are similar to the REX prefix of 64-bit mode), just about one interesting feature. Since the prefix is now 2 or 3 bytes, and an SSE instruction is usually at least 3 bytes, this would bloat the code segment with huge instructions and certainly make the processor cry a lot when fetching them. The trick Intel (and AMD too) used was a field that implies which legacy prefix byte (none, 0x66, 0xf3 or 0xf2) virtually precedes the opcode, so that byte is saved. The same idea was used again to spare the 0x0f escape byte, or even the two bytes 0x0f, 0x38 or 0x0f, 0x3a, which are very common opcode escapes for SSE instructions. So if we had to use an SSE instruction, for instance:

66 0f 38 17 c0 (PTEST XMM0, XMM0): its first 3 bytes can be implied by the VEX prefix, so the instruction stays the same size! Cowabunga.
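To see the byte saving concretely, here is a sketch (the function name `vex_implied_bytes` is hypothetical) that expands a VEX prefix back into the legacy bytes it stands for. It assumes the AVX-era encodings: pp = 0/1/2/3 selects none/0x66/0xf3/0xf2, and mmmmm = 1/2/3 selects 0x0f, 0x0f 0x38 and 0x0f 0x3a. VPTEST XMM0, XMM0, the VEX form of the PTEST example, encodes as c4 e2 79 17 c0, still five bytes.

```c
#include <stdint.h>

/* Writes the legacy bytes a VEX prefix stands for into out: an optional
   0x66/0xf3/0xf2 prefix selected by pp, then the escape bytes selected by
   mmmmm. buf must point at a 0xc4/0xc5 byte already known to start a VEX
   prefix. Returns the number of bytes written, or -1 for an unknown map. */
int vex_implied_bytes(const uint8_t *buf, uint8_t out[4])
{
    static const uint8_t pp_prefix[4] = { 0x00, 0x66, 0xf3, 0xf2 };
    int pp, map, n = 0;

    if (buf[0] == 0xc5) {      /* 2-byte VEX: pp in byte 1, map is always 0x0f */
        pp = buf[1] & 3;
        map = 1;
    } else {                   /* 3-byte VEX: map in byte 1, pp in byte 2 */
        map = buf[1] & 0x1f;
        pp = buf[2] & 3;
    }
    if (map < 1 || map > 3)
        return -1;

    if (pp != 0)
        out[n++] = pp_prefix[pp];
    out[n++] = 0x0f;
    if (map == 2)
        out[n++] = 0x38;
    else if (map == 3)
        out[n++] = 0x3a;
    return n;
}
```

So the 3-byte prefix of VPTEST expands to 66 0f 38, the exact bytes it replaces in front of 17 c0: the VEX prefix occupies the same space as the bytes it implies.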

I mentioned in an earlier post that I am not going to support SSE5 for now, in the hope that it dies. I believe CPU wars (or instruction set architecture wars, to be accurate) are bad for coders, and even for end users, who can’t really enjoy those great technologies in the end.

A BSWAP Issue

Saturday, November 7th, 2009

The BSWAP instruction is very handy when you want to convert a big-endian value to a little-endian value and vice versa. Instead of reversing the bytes yourself with a few moves (or shifts, depending on how you implement it), a single instruction does it, as simple as that. Personally, it reminds me of htons (and friends) in socket programming. The instruction supports 32-bit and 64-bit registers and reverses all the bytes inside them. But it won’t work for 16-bit registers: the documentation says the result is undefined. Now WTF, what was the problem with supporting 16-bit registers, seriously? That’s child’s play for Intel, but instead it’s documented as an undefined result. I really love those undefined results, NOT.

When decoding a stream in 16-bit decoding mode, diStorm still shows BSWAP’s register as 32 bits, which, I agree, can be misleading, and I should change it (next version). Intel decided that this instruction won’t support 16-bit registers, and yet it stays a legal instruction rather than, say, raising an invalid-opcode exception. There are already known cases where a specific destination register causes an invalid-opcode exception, like MOV CR5, EAX. True, that’s a bit different (it’s not the register index but the decoding mode), but I guess it was easier for them to keep it defined and have it behave weirdly. Thinking of it again, there are some instructions that don’t work in 64 bits, like LDS. Maybe this was before they were willing to break backward compatibility… So now I keep getting emails asking me to fix diStorm to support BSWAP for 16-bit registers, which is really dumb. And I have to admit that I don’t like this whole instruction in 16 bits, because it’s really confusing and doesn’t give a true result. So what’s the point?

I was wondering whether the people who sent me emails about this issue wrote the code themselves or fed diStorm some existing code. The question is, how come nobody saw that it doesn’t work well for 16 bits? And what’s wrong with XCHG AH, AL? It’s the equivalent of BSWAP AX and works 100%. Actually, I can’t remember whether compilers generate code that uses BSWAP, but I am sure I have seen the instruction used with normal 32-bit registers, of course.
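For reference, here is what BSWAP computes on a 32-bit register, next to the well-defined 16-bit swap that XCHG AH, AL performs on AX, written as plain C. The function names are illustrative only.

```c
#include <stdint.h>

/* What BSWAP r32 does: reverse the order of the four bytes. */
static uint32_t bswap32(uint32_t v)
{
    return (v >> 24) | ((v >> 8) & 0x0000ff00u) |
           ((v << 8) & 0x00ff0000u) | (v << 24);
}

/* The 16-bit byte swap people actually want, i.e. what XCHG AH, AL
   does to AX. */
static uint16_t swap16(uint16_t v)
{
    return (uint16_t)((v >> 8) | (v << 8));
}
```

Applying either function twice gives back the original value, which is why the same operation converts in both directions.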

diStorm3 – Call for Features

Friday, October 2nd, 2009

[Update – diStorm3 News]

I have been working more and more on diStorm3 recently. The core code is already written, and it works great. I am still not going to talk about the structure itself that diStorm uses to format the instructions. There are now two APIs: the old one, which takes a stream and formats it into text, and a newer one, which takes a stream and decodes it into structures. The new one is much faster. Unlike diStorm64, where the text formatting was coupled with the decoding code, the two are now totally separated. For example, if you want to support AT&T syntax, you can do it in a couple of hours or less, really. I don’t like AT&T syntax, hence I am not going to implement it. I bet many people still can’t read it without getting confused…

So I am asking you guys to come up with ideas for diStorm3. So far I have gotten some new ideas from people, which I am going to implement, such as:

1) You will be able to tell the decoder to stop on any flow control instruction.

2) Instructions are going to be categorized, such as, flow-control, data-control, string instructions, io, etc. (To be honest, I am still not totally sure about this one).

3) Helper macros to extract data references. Since diStorm3 outputs structures, it’s really easy to know whether there’s a data reference and what its address is. Some macros will help with this work.

4) Code references: continues to the next instruction, continues to a target according to a condition, or jump-always and call-always.
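To make items 1 and 4 a bit more concrete, here is a hypothetical C sketch (not diStorm3's API; `flow_kind` and `classify_flow` are made-up names) that classifies a handful of 32-bit-mode, prefix-free encodings by their flow-control behavior and computes the target of relative branches. It is deliberately not exhaustive: indirect JMP/CALL (0xff /2, /4), LOOP/JECXZ, the 0x0f 0x8x near Jcc forms, interrupts and far transfers are left out.

```c
#include <stddef.h>
#include <stdint.h>

typedef enum {
    FC_NONE,        /* continues to the next instruction (or not handled here) */
    FC_COND_BRANCH, /* continues to a target or falls through, per a condition */
    FC_JMP,         /* jump-always */
    FC_CALL,        /* call-always */
    FC_RET          /* returns; target not known statically */
} flow_kind;

/* p points at the instruction located at addr. For relative branches,
   *target receives the destination address. */
flow_kind classify_flow(const uint8_t *p, size_t len, uint32_t addr, uint32_t *target)
{
    if (len == 0)
        return FC_NONE;

    uint8_t op = p[0];
    if (op >= 0x70 && op <= 0x7f && len >= 2) {   /* Jcc rel8 */
        *target = addr + 2 + (uint32_t)(int32_t)(int8_t)p[1];
        return FC_COND_BRANCH;
    }
    if (op == 0xeb && len >= 2) {                  /* JMP rel8 */
        *target = addr + 2 + (uint32_t)(int32_t)(int8_t)p[1];
        return FC_JMP;
    }
    if ((op == 0xe8 || op == 0xe9) && len >= 5) {  /* CALL/JMP rel32 */
        uint32_t rel = (uint32_t)p[1] | (uint32_t)p[2] << 8 |
                       (uint32_t)p[3] << 16 | (uint32_t)p[4] << 24;
        *target = addr + 5 + rel;                  /* wraps modulo 2^32 */
        return op == 0xe8 ? FC_CALL : FC_JMP;
    }
    if (op == 0xc3 || op == 0xc2)                  /* RET, RET imm16 */
        return FC_RET;
    return FC_NONE;
}
```

A decoder loop could stop as soon as this returns anything other than FC_NONE, which is roughly what item 1 asks for.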

I am looking forward to hearing more suggestions from you guys. Please make sure you are talking about disassembler features, and not about other layers that use the disassembler.

Just wanted to let you know that diStorm3 is going to be dual licensed with GPL and commercial. diStorm64 is deprecated and I am not going to touch it anymore, though it’s still licensed as BSD, of course.

Arkon Under the Woods

Saturday, May 2nd, 2009

Yeah, I know, I’m not all right, I’m even an ass, leaving you all (my dear readers, are there any left?) without saying a word, for the second time. At the end of last year I was in South East Asia for 3 months, and now I am in South America for 3 months and counting… It’s just that I really wish to keep this blog totally technical, but I guess we are all human after all. So yeah, I have been trekking a lot in Chile and Argentina (mostly in Patagonia) and having a great time here, now in Buenos Aires. Good steaks and wines, ohhh and the girls. Say no more.

It’s really cool that in almost every shitty hostel you go to, you will find free WiFi. So, carrying an iPod touch with me, I can actually be online. But apparently not many web developers think about mobile web pages, so I couldn’t write blog posts with Safari because of some problem with the text area object. For some reason (I guess some JS code doesn’t run well on the iPod), the keyboard thingy doesn’t come up and I cannot type anything. WordPress…

I am always surprised again to see how many computers here, in coffee shops or Internet cafés, are not really secured. Sometimes you run as admin, and there is no antivirus, which I think is a good thing to have for average users. And if you plug in your camera to upload some pictures, the next time you will find some stupid new files on it, named desktop.ini and autorun.inf. Sounds familiar? And then I read some MS blog post about disabling AutoRun for removable storage devices... yippee, about time. What I am also trying to say is that one could easily build a zombie army out of all those computers… the ease of access and the lack of protection drive me mad.

Anyhow, I had some free time; of course, I am on a vacation, sort of, after all. And I accidentally reached an amazing blog that I couldn’t stop reading for a few days. Meet NO EXECUTE! If you are a low-level freak like me, you will really like it too. Darek begins with hardware stuff, which will fill some gaps for most people, I believe, and then he talks about virtualization and emulators (his obsession). I just read it like a fantasy book, eager to get to the next chapter every time. I learned tons of stuff there, and I really like seeing that even today a few people still measure and check optimizations in cycles per instruction rather than in seconds or milliseconds. One of the things I really liked there was a trick he pulled when, for instance, the guest OS runs little-endian and the host runs big-endian. Then every memory access larger than one byte has to be byte-swapped. To eliminate the byte swaps, which are expensive, he turned all of the guest’s memory upside down, and therefore its endianness flipped as well. Now it might sound like a simple matter, but this is awesome, and the way he describes it, you can really feel the excitement behind the invention… He also talks about how lame Intel and AMD are for coming up with new instruction sets every Monday, which I have already mentioned in the past as well.
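Here is a minimal C sketch of that trick in the mirror-image setup: a big-endian guest on a little-endian host such as x86, which is an assumption of this example (the post's example is the other way around). Guest memory is stored back to front, so a multi-byte guest load becomes a plain native load at a mirrored offset, with no byte swap. The names and the tiny fixed-size memory are made up for illustration, and bounds checks are omitted.

```c
#include <stdint.h>
#include <string.h>

#define GUEST_MEM_SIZE 16u   /* tiny guest memory, for illustration only */

/* Guest address a lives at host_mem[GUEST_MEM_SIZE - 1 - a]. */
static uint8_t host_mem[GUEST_MEM_SIZE];

static void guest_store8(uint32_t a, uint8_t value)
{
    host_mem[GUEST_MEM_SIZE - 1 - a] = value;
}

/* Big-endian 32-bit guest load. Guest bytes a..a+3 sit in reverse order at
   host offsets SIZE-a-4 .. SIZE-a-1, so a native little-endian load there
   already produces the big-endian value. No bounds checking. */
static uint32_t guest_load32(uint32_t a)
{
    uint32_t value;
    memcpy(&value, &host_mem[GUEST_MEM_SIZE - a - 4], sizeof value);
    return value;
}
```

The swap is replaced by a subtraction in the address computation (SIZE - a - n); single-byte accesses just use SIZE - 1 - a.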

Regarding diStorm now, I decided to discontinue the development of the current diStorm64 version. But hey, don’t worry. I am going to open source diStorm3, and I am still considering making it dual licensed. The benefits of diStorm3 are structure output, and believe me, the speed is amazing, and like in the good old days, the structure per instruction is unbelievably tiny (relative to other disassemblers I saw out there). You guys are gonna like it.

Thing is, I have no idea when I am getting home… Now, with this swine flu spreading like hell, I don’t know where I will end up. The only great thing about this swine flu, so to speak, is that you can see evolution in progress.

Salud

Instructions’ Prefixes Hell

Sunday, December 21st, 2008

Since the first day diStorm was out, people haven’t known how to deal with the fact that I drop (ignore) some prefixes. It seems that dropping unused prefixes isn’t such a great feature for many people, and it only complicates scanning streams. Therefore I am thinking about removing the whole mechanism, or maybe changing it so it preserves the same interface but behaves differently.

For the stream “67 50”, diStorm’s result will be “db 0x67” followed by “push eax”. The 0x67 prefix is supposed to change the address size, but no address is used in our case, thus it’s dropped. However, if we look at the hex code of the “push eax” part, we will see “67 50”. And this is where most people become dumbfounded: getting the same prefix byte of the stream in two results is confusing, in a way. A look at other disassemblers will tell you that diStorm is not the only one playing such games with prefixes. Sometimes I get emails about this “impossible” prefix, since it gets output twice, which is wrong, right? Well, I don’t know, it depends on how you choose to decode it. The way I chose to decode prefixes was really advanced: each prefix could be ignored unless it actually affected (one of) the operands. I had to keep track of each prefix and know whether it affected any operand in the instruction, and only then did I decide which prefixes to drop. This all sounds right, in a way. Hey, at least to me.

However, we haven’t even talked about what to do with multiple prefixes of the same family (segment override: DS, ES, SS, etc.). This one is really up to the designer’s interpretation. The way I did it in diStorm is probably wrong, I admit it; that’s why I want to rewrite the whole prefix handling from scratch. There are 4 or 5 groups of prefixes, and according to the specs (Intel/AMD), I quote: “A single instruction should include a maximum of one prefix from each of the five groups.” …. “The result of using multiple prefixes from a single group is unpredictable.”. This pretty much sums up all the problems in the world related to prefixes. I guess you can see for yourself that these 2 lines can be interpreted in many different ways. We now know that many prefixes can lead to “unpredictable” results, but in reality it won’t shut down your CPU, it won’t even throw an exception. So screw it, you say, and you’re right. Now let’s see some CPU (16-bit) logic for decoding the prefixes:

```
while (next byte is a prefix) {
    switch (prefix) {
    case 0x2e: use_seg = CS; break;    /* segment overrides */
    case 0x3e: use_seg = DS; break;
    case 0x36: use_seg = SS; break;
    ....
    case 0x66: op_size = 32; break;    /* operand-size override */
    case 0x67: addr_size = 32; break;  /* address-size override */
    case 0xf3: rep = Z; break;         /* REP/REPZ */
    ...
    }
    skip the prefix byte in the stream;
}
```

The processor uses those flags to know which prefixes were present. The thing about using a loop (in any form) is that when you have to produce text from streams with many prefixes, you don’t know whether the processor really uses the first occurrence of a prefix or its last, or maybe both? And maybe Intel and AMD implement it differently?

You know what? Why the heck do I bother so much with minor edge cases that never really happen in real code sections? I ask myself that too; maybe I shouldn’t. Although I happened to see for myself some malware code that tries to screw up disassemblers with many extra prefixes, etc., and I thought diStorm could help malware analysts as well with advanced prefix decoding.

Anyway, according to the logic above, I’m supposed to use the last prefix of each type. Given a stream such as 66 66 67 67 40, I will get:

```
0: 66        (dropped)
2: 67        (dropped)
1: 66 67 40
```

Now you can see that the prefixes used are the second and the fourth bytes, and that the instruction starts at the second byte of the stream. Now I can officially commit suicide; even I can’t follow these addresses, it’s hell. So, any better solution?
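The last-one-wins loop can be written as a small, self-contained C sketch. The group table follows the usual Intel grouping, the function names are hypothetical, and 32-bit mode is assumed so that 0x40 is INC EAX rather than a REX prefix. It records, for each prefix group, the offset of the prefix that takes effect; every other prefix byte is one a disassembler would have to show as dropped.

```c
#include <stddef.h>
#include <stdint.h>

enum { GRP_LOCK_REP, GRP_SEG, GRP_OPSIZE, GRP_ADDRSIZE, GRP_COUNT };

/* Returns the group of a legacy prefix byte, or -1 if it isn't one. */
static int prefix_group(uint8_t b)
{
    switch (b) {
    case 0xf0: case 0xf2: case 0xf3:
        return GRP_LOCK_REP;
    case 0x26: case 0x2e: case 0x36: case 0x3e: case 0x64: case 0x65:
        return GRP_SEG;
    case 0x66:
        return GRP_OPSIZE;
    case 0x67:
        return GRP_ADDRSIZE;
    default:
        return -1;
    }
}

/* Mimics the "last one wins" loop: last[g] receives the offset of the
   prefix that takes effect for group g (-1 if none). Returns the number
   of prefix bytes, i.e. the offset of the opcode byte. */
size_t scan_prefixes(const uint8_t *p, size_t len, int last[GRP_COUNT])
{
    size_t i;

    for (int g = 0; g < GRP_COUNT; g++)
        last[g] = -1;
    for (i = 0; i < len; i++) {
        int g = prefix_group(p[i]);
        if (g < 0)
            break;          /* first non-prefix byte: the opcode */
        last[g] = (int)i;   /* overwrite: earlier prefixes of this group are superseded */
    }
    return i;
}
```

For 66 66 67 67 40 it reports offsets 1 and 3 as the effective prefixes and the opcode at offset 4, so offsets 0 and 2 are the superseded ones, matching the listing above.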

Software That Uses diStorm

Sunday, August 24th, 2008

A few years after diStorm came out, we can already see it used here and there. Most users are private rather than commercial, but even commercial applications use diStorm. I guess many people also use it internally in their companies, but without their word I can’t really know about it, except for some friends who told me so.

It’s pretty cool to write something useful that people actually use, even commercially. That was my main reason for releasing diStorm under the permissive BSD license. The problem arises when commercial applications don’t give credit for your work; it’s really frustrating, and I guess one can’t do much about it. There is this Vietnamese anti-virus software, BKV Pro, claimed to be written by professors and students (or the like), so I really didn’t expect such people to withhold credit. But this is our world :( I got an email from an advocate about diStorm’s copyright infringement. It seems they also abuse WinRAR’s license, so I’m not the only one… To be honest, I would prefer that they stop using diStorm immediately rather than not give me credit. There are other disassembler libraries out there; they could use those as well. On the other hand, I’m happy to know they use diStorm; I only ask for recognition, nothing else, after all the hard work I put into it. I emailed them, but got no response. This license violation by the AV guys seems to make a lot of noise on Vietnamese blogs and forums, though I can’t really understand anything, except where they quote diStorm’s license or mention my name. I haven’t contacted OSI yet, and I’m not sure they can really help, but it’s worth a try.

Anyway, there are good people who do give credit, and I decided it’s about time I showed a small list of users. The first one goes to a good friend I met through diStorm, Sanjay Patel, who reported many bugs and helped test support for 64-bit environments (not to be confused with AMD64 decoding). He works at RotateRight.com (he is a founder), which last month released its Zoom product, a very smart profiler, currently only for Linux, though. There is a free 30-day trial version; you should check it out. It seems very promising, since I know more of the guys behind this product, although I haven’t tested it myself. But hey, it uses diStorm :)

More products which use diStorm:

Apple Shark Profiler

SolidShield – server side protector

DFSee – Low Level disk tools

And some open source projects:

Crypto Implementations Analysis Toolkit

Well, that’s what I’m aware of, at least; I believe there are more, though.

Have fun :)

Testing The VM

Saturday, March 22nd, 2008

Since Imri and I started working on this huge project, we have finally reached a state where a few layers are ready: disassembler to structure output –> structure to expression trees –> VM to run those expressions. This is all for x86 so far, but the code is supposed to be generic; that’s why the expression trees infrastructure has been there from the beginning, so you can translate any machine code into this language and then the same VM will be able to run it. Sounds familiar in a way? Java, ahem ahem…

Imri has described some of our work in his last blog post, where you can find more information on what’s going on. Now that we have the VM somewhat working, my next step is to check all the layers, that is, to run a piece of code (currently assembly based) and see that I get the same results as when running it for real. The idea was inline assembly in Python (yes yes :) you heard me right), thanks to a friend who hasn’t published his work yet. I changed the idea so that instead of inline assembly, I have a function that takes a chunk of assembly text and runs it both on the VM and natively under Python. When both are done executing, I check the result in EAX and see whether they match… EAX can hold anything, even EFLAGS, so I can even see whether the flags of an operation like INC were calculated correctly in my VM…

The way I run the code natively in Python is by using ctypes. I wrote a wrapper for YASM, so now I can assemble a few lines of assembly; the decomposer (the disassembler-to-expressions layer) is fed with the resulting machine code, which is then run in the VM. Then I use ctypes to run a raw buffer of code, using WINFUNCTYPE and the address of the buffer holding the binary code, which I can then execute as simply as calling that instance. And I run it natively inside the context of Python’s own thread. The downside is that I’m capped to 32 bits (since I’m on a 32-bit environment), whereas x86 code can be 16, 32 or 64 bits. Thus, I can’t really check 16 and 64 bits at the moment. Further disadvantages are that I can’t write anywhere in memory just for the sake of it; I need to allocate a buffer and feed my code with a pointer to it, unlike in the VM, where I can read and write anywhere I wish (it doesn’t imitate a PC). And I’d better not raise any exceptions, intentionally or not, because I will blow up my own Python process. ;) So I have to be a good boy and do simple tests like mov al, 0x7f; inc al, and then see whether the result is 0x80 and whether OF is set, for example. It’s really amazing to see the unit test function return true on such a test :) Along the way we find bugs in the upper layers that sit above diStorm (which is quite stable on its own), fix them immediately and rerun the unit tests, while I add more unit tests and fix more things. Eventually the goal is to use an inline C compiler, run the code and check the result. At this phase of the project I’m interested in checking specific instructions, to know that everything we are built on works great; then we can move on to bigger blocks of code, doing Fibonacci and other stuff in C…
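As an illustration of what such a unit test checks, here is a small C reference model of INC on an 8-bit register and the flags it sets. It is a sketch based on the documented INC semantics (OF, SF, ZF, AF and PF are updated, CF is left untouched), not the VM's actual code; `inc8` and the `FLAG_*` names are made up here, though the bit positions are the standard EFLAGS ones.

```c
#include <stdint.h>

/* EFLAGS bits written by INC (CF, bit 0, is deliberately left alone). */
#define FLAG_PF 0x004u
#define FLAG_AF 0x010u
#define FLAG_ZF 0x040u
#define FLAG_SF 0x080u
#define FLAG_OF 0x800u

/* Reference model of INC r/m8: returns the result and updates *eflags. */
static uint8_t inc8(uint8_t v, uint32_t *eflags)
{
    uint8_t r = (uint8_t)(v + 1);
    uint32_t f = *eflags & ~(FLAG_PF | FLAG_AF | FLAG_ZF | FLAG_SF | FLAG_OF);
    int bits = 0;

    for (uint8_t t = r; t; t >>= 1)       /* count set bits for PF */
        bits += t & 1;
    if ((bits & 1) == 0) f |= FLAG_PF;    /* even number of set bits */
    if ((v & 0x0f) == 0x0f) f |= FLAG_AF; /* carry out of the low nibble */
    if (r == 0) f |= FLAG_ZF;
    if (r & 0x80) f |= FLAG_SF;
    if (r == 0x80) f |= FLAG_OF;          /* 0x7f + 1: signed overflow */
    *eflags = f;
    return r;
}
```

For the mov al, 0x7f; inc al test, the model yields 0x80 with OF, SF and AF set, which is what the VM's result would be compared against.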

I am a bit annoyed by the way I run the code natively. I mean, I trust the code since I write it myself and there are only simple tests, but I can screw up the whole process by dividing by zero, for example. I have to run the tests on an x86 machine in a 32-bit environment, so I can’t really check different modes. The good thing is that I can really use the stack, as long as I leave it balanced when the test ends (restoring all registers, etc.)… I wish I could run the tests in a virtual-8086 (v86) environment or something like that, but it wouldn’t be portable to Linux, etc… Even spawning another process and injecting the code into it would require separate code for Windows and for Linux. And even then, if the code is bogus, I can’t really control everything; it would require more work per OS as well. So for now the infrastructure is pretty cool, and it gives me what I need. But if you have any idea how to do it better, let me know.

Anti Debugging

Monday, January 14th, 2008

I found this nice page about anti-debugging tricks. It covers so many of them, and if you know the techniques, it’s really fun to go through them quickly one by one. You can take a look yourself here: Windows Anti-Debug Reference. One of the tricks really caught my attention, and it was something like this:

```
push ss
pop ss
pushf
```

What really happens is that when you write to SS, the processor has a protection mechanism so that you can safely update rSP immediately afterwards. Otherwise it could lead to catastrophic results if an interrupt occurred precisely after SS was updated but before rSP was. Therefore the processor inhibits interrupts (including the single-step trap) until the end of the next instruction, whatever it is. However, it inhibits them only once, for the next instruction, no matter what; it won’t work if you pop ss and then do it again… This means that if you are under a debugger or a tracer, the above code will push onto the stack the real flags of the processor’s current execution context.

Thus doing this:

```
pop eax
and eax, 0x100
jnz under_debugging
```

ANDing the flags we just popped with 0x100 examines the trap flag (TF). If you simply did pushf and then pop eax, the trap flag would appear clear, telling you that you’re not being debugged, which may be a lie. Here, though, the trap is held off (pended) until after the next instruction, so the debugger engine never gets a chance to recognize the pushf instruction and fix up the pushed flags. How lovely.

I really agree with some other posts I saw claiming that an anti-debugging trick is just like a zero-day: if you’re the first to use it, you will win and use it well, until it gets known and taken care of. Although, to be honest, a zero-day is way cooler and a whole different story, but oh well… Besides, anti-debugging can’t really do harm; it just wastes some of the reverser’s time.

Since I wrote diStorm and read both Intel’s and AMD’s specs of most instructions inside out, I immediately knew about “mov ss” too; the docs even mention this special behavior. But it never occurred to me to use this trick. Anyway, another way to do the same is:

```
mov eax, ss
mov ss, eax
pushf
```

A weird issue was that mov ss, eax must really be mov ss, ax, although all disassemblers show both as mov ss, ax (as if it were 16 bits). In truth you will need a db 0x66 to make this mov work… You can also do lots of fooling around with this instruction, like mov ss, ax; jmp $-2; if you single-step that without seeing the next instruction, you might go crazy before you realize what’s going on. :)

I even went further and tried to use a privileged instruction like CLI after writing to SS, in the hope that the processor executes it in some special mode and there might be a weird bug. And guess what? It didn’t work, and an exception was raised, of course. Otherwise I probably wouldn’t be writing about it here :). It seems the processor’s logic has a kind of internal flag to hold off interrupts until the end of the next instruction, and that’s all. To find bugs you need to be creative… it never hurts to try, even if it sounds stupid. Maybe with another privileged instruction, in different rings and modes (like pmode/real mode/etc.), it could lead to something weird, but I doubt it, and I’m too lazy to check it out myself. But imagine you could run a privileged instruction from ring 3… now stop.

About DIV, IDIV and Overflows

Thursday, November 8th, 2007

The IDIV instruction is a divide operation. It is less popular than its counterpart, DIV. The difference between the two is that IDIV is for signed numbers, whereas DIV is for unsigned numbers. I guess the “i” in IDIV means Integer, thus implying a signed integer. Sometimes I still wonder why they didn’t name it SDIV, which is much more readable and self-explanatory. But the name is not the real issue here. What I would like to say is that there is a difference between signed and unsigned division. Otherwise there wouldn’t have been two different instructions in the first place, right? :) What a smart ass… The reason both are necessary is that signed division behaves differently from unsigned division. Take a finite string of bits (i.e., an unsigned char) holding the value -2 and unsigned-divide it by -1: the result is 0, because as unsigned numbers they are 0xfe and 0xff, and naively asking how many times 0xff fits into 0xfe gives 0. Now that’s a shame, because we wanted to treat the division as signed (-2 / -1 = 2). For that, the algorithm is a bit more complex. I am really not a math guy, so I don’t want to get into the dirty details of how signed division works; I will leave that algorithm for the BasicOps column of posts… Anyway, I can say that if you have an unsigned division, you can use it to do a signed division of the same operand size.

Some processors only have signed division instructions. So to do an unsigned division, one might convert the operands to the next bigger size and then do the signed division. That way the high half of each operand is zero (so its sign bit is clear), which makes the division work as expected.
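Both conversions are easy to sketch in C on 8-bit operands (the function names are illustrative). The first emulates an unsigned division with a signed one by widening, as described above; the second builds a signed division out of an unsigned one by dividing the magnitudes and fixing the sign, which is one common way to do it, not necessarily how any particular CPU does it. Division by zero is not handled.

```c
#include <stdint.h>

/* Unsigned 8-bit division using only signed division: zero-extend both
   operands to 16 bits so their sign bits are clear, then divide signed. */
static uint8_t udiv8_via_signed(uint8_t a, uint8_t b)
{
    int16_t wa = (int16_t)a, wb = (int16_t)b;   /* zero extension */
    return (uint8_t)(wa / wb);
}

/* Signed 8-bit division using only unsigned division: divide the
   magnitudes, then negate the quotient if exactly one operand was
   negative (truncation toward zero, like IDIV). -128 / -1 does not fit
   in int8_t; IDIV raises #DE there, while this sketch just wraps. */
static int8_t sdiv8_via_unsigned(int8_t a, int8_t b)
{
    uint8_t ma = (uint8_t)(a < 0 ? -a : a);
    uint8_t mb = (uint8_t)(b < 0 ? -b : b);
    uint8_t q = (uint8_t)(ma / mb);
    return (int8_t)((a < 0) != (b < 0) ? -q : q);
}
```

With these, 0xfe / 0xff unsigned is 0, while -2 / -1 signed is 2, the two answers from the example above.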

With x86, luckily, we don’t have to do any nifty tricks; we have both, DIV and IDIV, straight away for our use. Unlike multiplication, where an overflow only sets the CF and OF flags, an overflow in division raises a divide error exception (#DE). Like it or not, this is the situation. Therefore it’s necessary to extend the dividend before doing the operation: sign extension (e.g., CBW/CWD/CDQ) for IDIV or zero extension for DIV, depending on the signedness of the operands, and only then do the division.

What I really wanted to talk about is the way the processor detects the overflow. I am interested in that behavior since I am writing a simple x86 simulator as part of the diStorm3 project, so truly, my code is the “processor”, or should I say the virtual machine… Anyhow, the Intel documentation for the IDIV instruction shows a pseudo-algorithm:

```
temp = AX / src; // Signed division by 8bit
if (temp > 0x7F) or (temp < 0x80)
    // If a positive result is greater than 7FH or a negative result is less than 80H
    then #DE; // Divide error
```

src is a register or a memory operand (IDIV has no immediate form), which yields an 8-bit value that is sign-extended to 16 bits; AX is then signed-divided by it. So far so good, nothing special.

Then comes a stupid-looking if statement. At first glance it says that if temp is greater than 0x7f or less than 0x80, then bam, raise the exception. So you ask yourself what these special values have to do with overflowing.

Reading the next comment makes things clearer. For 8-bit input, the division is done on 16 bits, and the result is stored in 8 bits as a signed value, so it can range from -128 to 127. Thus, if the result is positive and above 127, there is an overflow, because the value would otherwise be treated as a negative number, which is a no-no. The same goes for negative results: if the result is negative and below -128, there is an overflow, since such a number cannot be represented as a signed 8-bit number.

It is vital to understand that overflow means that a resulting value cannot be stored inside its destination because it’s too low or too big to be represented in that container. Don’t confuse it with carry [flag].

So how do we know whether the result is positive or negative? If we look at temp as a byte, we can’t really know. But that’s why we have temp as 16 bits. The extra half of temp (the high byte) is really the hint for the sign of the whole value. If the high byte is 0xff, we know the result is negative; otherwise the result is positive. Well, that’s not 100% accurate, but let’s keep things simple for the sake of discussion. Anyway, it is enough to examine the most significant bit of temp to know its sign. So let’s take a look at the if statement again, now that we know more about the case.

```
if temp[15] == 0 and temp > 127: raise overflow
```

Suddenly it makes sense, huh? We make sure the number is positive (the sign bit is clear), and yet the result is higher than 127, so it cannot be represented as a signed value in an 8-bit container.

Now, let’s examine its counterpart guard for negative numbers:

```
if temp[15] == 1 and temp < 128: raise overflow
```

OK, I tried to fool you here. We have a problem. Remember that temp is 16 bits long? It means that if, for example, the result in temp after the division is -1 (0xffff), our condition is still true and will raise an overflow exception, although the result is really valid (0xff represents -1 in 8 bits as well). The problem originates in the signed comparison. By now you should understand that the first if statement, for a positive number, uses an unsigned comparison as well, although temp is a signed value.

We are left with one option: since we are forced to use unsigned comparisons (my virtual processor supports only unsigned comparisons), we have to convert the signed 8-bit bound 0x80 (-128) into a 16-bit unsigned value, which is 0xff80. As easy as that, just sign-extend it…

So taking that value and putting it in its place we get the following if statement:

```
if temp[15] == 1 and temp < 0xff80: raise exception
```

We know by now that temp is compared as an unsigned number. Therefore, if the result was a negative number (0x8000 or above) and yet below 0xff80, we cannot represent that value in an 8-bit signed container, and we have to raise the divide error exception.

Eventually we want to merge both if statements into one. Sparing you the basic boolean algebra (the two overflow ranges, 0x80..0x7fff and 0x8000..0xff7f, are adjacent), we end up with:

```
if (temp > 0x7f) && (temp < 0xff80):
    then raise exception…
```
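Putting it together, here is a C sketch of IDIV r/m8 that uses the merged unsigned check on the 16-bit temp. `idiv8` is a hypothetical name; the quotient relies on C's integer division truncating toward zero, which matches IDIV, and the divide-by-zero case, which also raises #DE, is checked first.

```c
#include <stdint.h>

/* Emulates IDIV r/m8: AX / src, quotient to AL, remainder to AH.
   Returns 0 on success, or 1 where the CPU would raise #DE
   (AX is left unchanged in that case). */
static int idiv8(uint16_t *ax, uint8_t src)
{
    int32_t dividend = (int16_t)*ax;   /* AX as a signed value */
    int32_t divisor  = (int8_t)src;    /* sign-extend the 8-bit source */

    if (divisor == 0)
        return 1;                      /* division by zero is #DE as well */

    int32_t q = dividend / divisor;    /* truncates toward zero, like IDIV */
    int32_t r = dividend % divisor;    /* remainder has the dividend's sign */
    uint16_t temp = (uint16_t)q;       /* keep 16 bits, as in the pseudocode */

    if (temp > 0x7f && temp < 0xff80)  /* unsigned compares only */
        return 1;                      /* quotient doesn't fit a signed byte */

    *ax = (uint16_t)(((uint8_t)r << 8) | (uint8_t)temp);
    return 0;
}
```

Both boundary quotients, 127 (0x007f) and -128 (0xff80), pass the check, while 128 (0x0080) and -129 (0xff7f) trigger #DE; -32768 / -1 gives temp = 0x8000 and is caught too.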